As a newbie to NodeJS, I went through the official documentation and managed to achieve it. However, during the course, I faced many difficulties and by referring to many of the articles, I was managed to resolve all these hurdles.

So I thought of including these problems which I faced and how to overcome them. So it would be a great help to anyone who’s exploring or trying to achieve this more easily.

For this, I will be using SQL Server 2014 on an Instance (.\SQL2K14).

1. First you need to download and install NodeJS. (https://nodejs.org/en/)

2. Install MSSQL package for Node, using the following syntax: (Use windows command prompt)

npm install mssql3. Create a file named ‘connecttosql.js’ and include the following code:

//We require mssql package for this sample

var sqlcon = require('mssql');

function GetSQLData(queryCallback){ //A callback function is taken as an argument. Once the operation is completed we will be calling this

//SQL Configuration

var config = {

user:'###' //SQL User Id. Please provide a valid user

,password:'######' //SQL Password. Please provide a valid password

,server:'localhost\\SQL2K14'

/*

Since my SQL is an instance I am using 'localhost\\Instance'.

If you have SQL installed on the default instance, it should be server:'localhost'

*/

,database: 'master' //You can use any database here

}

var connection = new sqlcon.Connection(config,function(err){

//In case of an error print the error to the console. You can have your customer error handling

if (err) console.log(err);

//Query Database

var dbQuery = new sqlcon.Request(connection);

//Purposely we are delaying the results

dbQuery.query("WAITFOR DELAY '00:00:05';SELECT * FROM INFORMATION_SCHEMA.TABLES",function(err,resultset){

//In case of an error print the error to the console. You can have your customer error handling

if (err) console.log(err);

//Passing the resultset to the callback function

queryCallback(resultset);

})

});

}

function callback (resultset){

console.dir('Results returned and printed from the call back function');

console.dir(resultset);

//Exit the application

console.dir('Exiting the Application');

process.exit(0);

}

//Calling the function

console.dir('Calling GetSQLData');

GetSQLData(callback);

/*

Once we call this function even there's a delay to return the results

you will see the next line printing 'Waiting for callback function to get invoked...'

*/

console.dir('Waiting for callback function to get invoked...');

I have provided the relevant information as comments.

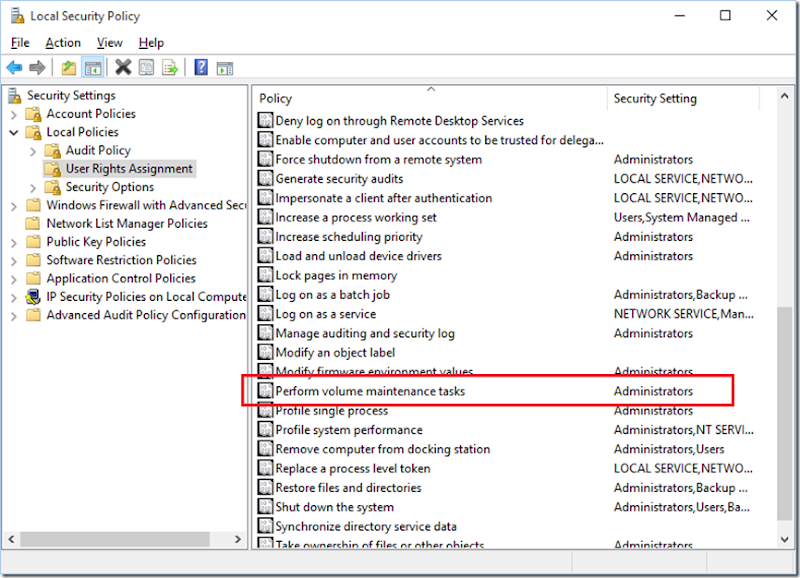

Before running the program please make sure the following configurations on the SQL server is already done:

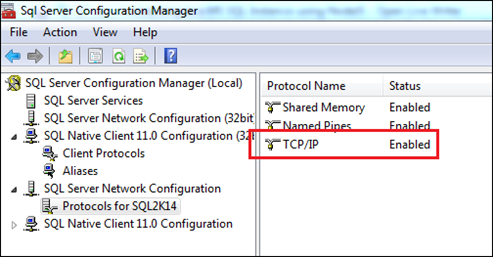

1. Enable TCP/IP Protocols in SQL Server Configuration Manager for both server and client.

Or else when running it will result an error shown below:

{ [ConnectionError: Port for SQL2K14 not found in ServerName;[YourPCName];InstanceName;SQL2K14;IsClustered;No;Version;12.0.2000.8;np;\\[YourPCName]\pipe\M

SSQL$SQL2K14\sql\query;;]

name: 'ConnectionError',

message: 'Port for SQL2K14 not found in ServerName;[YourPCName];InstanceName;SQL2K14;IsClustered;No;Version;12.0.2000.8;np;\\\\[YourPCName]\\pipe\\MSSQL

$SQL2K14\\sql\\query;;',

code: 'EINSTLOOKUP' }

{ [ConnectionError: Connection is closed.]

name: 'ConnectionError',

message: 'Connection is closed.',

code: 'ECONNCLOSED' }

2. In SQL Server Configuration Manager under SQL Server Services make sure that ‘SQL Server Browser’ service is running.

Or else when running the script it will result an error shown below:

{ [ConnectionError: Failed to connect to localhost:undefined in 15000ms]

name: 'ConnectionError',

message: 'Failed to connect to localhost:undefined in 15000ms',

code: 'ETIMEOUT' }

{ [ConnectionError: Connection is closed.]

name: 'ConnectionError',

message: 'Connection is closed.',

code: 'ECONNCLOSED' }

if the aforementioned issues are already addressed execute the above file using the following syntax in a Windows Command Window:

node connecttosql.jsYou should get a similar result which is shown below:

'Calling GetSQLData'

'Waiting for callback function to get invoked...'

'Results returned and printed from the call back function'

[ { TABLE_CATALOG: 'master',

TABLE_SCHEMA: 'dbo',

TABLE_NAME: 'spt_fallback_db',

TABLE_TYPE: 'BASE TABLE' },

{ TABLE_CATALOG: 'master',

TABLE_SCHEMA: 'dbo',

TABLE_NAME: 'spt_fallback_dev',

TABLE_TYPE: 'BASE TABLE' },

{ TABLE_CATALOG: 'master',

TABLE_SCHEMA: 'dbo',

TABLE_NAME: 'spt_fallback_usg',

TABLE_TYPE: 'BASE TABLE' },

{ TABLE_CATALOG: 'master',

TABLE_SCHEMA: 'dbo',

TABLE_NAME: 'spt_values',

TABLE_TYPE: 'VIEW' },

{ TABLE_CATALOG: 'master',

TABLE_SCHEMA: 'dbo',

TABLE_NAME: 'spt_monitor',

TABLE_TYPE: 'BASE TABLE' },

{ TABLE_CATALOG: 'master',

TABLE_SCHEMA: 'dbo',

TABLE_NAME: 'MSreplication_options',

TABLE_TYPE: 'BASE TABLE' } ]

'Exiting the Application'

I hope this will help anyone who’s using node to connect to SQL and facing the aforementioned issues.

![image_thumb[2] image_thumb[2]](https://lh3.googleusercontent.com/-n-fFIrbdqqw/Vp91GMn8jgI/AAAAAAAADmg/yqzhpO_Zz-k/image_thumb%25255B2%25255D_thumb%25255B6%25255D.png?imgmax=800)

![image_thumb[4] image_thumb[4]](https://lh3.googleusercontent.com/-rCHonaF_8ls/Vp91HvEHO1I/AAAAAAAADmw/KvL7ahgmw7Q/image_thumb%25255B4%25255D_thumb%25255B3%25255D.png?imgmax=800)